Ahead of the big announcements at Ignite, Microsoft has dropped a major one: Azure will support Availability Zones. This brings a whole new set of architectural possibilities into play when thinking about cloud migration.

First, let’s address what an Availability Zone means. Azure datacenters are regional: they exist in one (or more) locations within a geographic area and are equipped with significant redundancy, including contingency plans for loss of grid power and multiple data links, as any good datacenter should have. However, if you want a copy of your application running in a different building, you need to set up a secondary region, which is at least 500 miles from the first region. This can introduce a host of difficulties in managing an application: you’ve just added several milliseconds of latency, and many applications just can’t tolerate that. For example, the database that holds your application’s data would need to sacrifice either accuracy (asynchronous commits) or performance (synchronous, but high-latency commits). Azure Availability Zones seek to solve this problem.
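
To make the synchronous-commit trade-off concrete, here is a rough back-of-the-envelope sketch in Python. It assumes a single writer that waits for each commit to be acknowledged by the remote replica before sending the next one, so throughput is capped by the round-trip time. The function name and the latency figures are my own illustrative assumptions, not measurements of any Azure region.

```python
def max_serial_commits_per_second(round_trip_ms: float) -> float:
    """Upper bound on commits/s for one writer that waits for each
    synchronous commit to be acknowledged before sending the next."""
    if round_trip_ms <= 0:
        raise ValueError("round_trip_ms must be positive")
    return 1000.0 / round_trip_ms


# Illustrative round-trip times (assumed, not measured).
for label, rtt_ms in [("nearby facility", 1.0), ("distant region", 20.0)]:
    rate = max_serial_commits_per_second(rtt_ms)
    print(f"{label}: {rtt_ms} ms round trip -> at most {rate:.0f} commits/s")
```

Real databases batch and pipeline commits, so treat this as a ceiling for the simplest case rather than a prediction.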

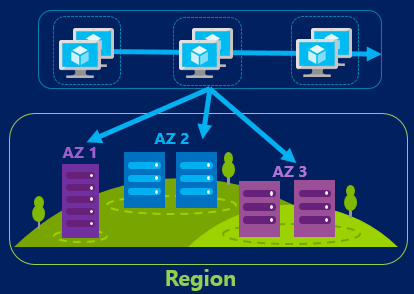

Azure Availability Zones are demarcated datacenters within a region – this is, if I’m not mistaken, very similar to Amazon’s approach. Three physical locations, close enough to maintain very low-latency connections, each host an Azure datacenter. You can choose to host your application across these three facilities, which allows an infrastructure architect to add a layer of redundancy without adding a whole new layer of complexity. Each facility has dedicated power, cooling, and network connectivity.

Realistically, it is rare for an entire region to go down – AWS had a cascading failure with S3 recently in one region, but it wasn’t a full region outage, technically. Microsoft had a storage issue of their own, but again, this wasn’t a region failure. The closest we’ve gotten is a Zone failure at AWS in Australia[footnote]Important note here about SLA. Amazon requires you to maintain a VM in more than one Zone in order to get an SLA (not an AWS guy, but this is what I read in the docs). The failure described here would not have an impact if correct architectural patterns had been followed, per AWS. Understand your SLA and understand what your responsibilities are, as a customer, to receive one![/footnote]. Microsoft has selected locations for their datacenters that are infrequently impacted by natural disasters – and this is part of the value of Availability Zones. While it’s rare for a natural disaster to wipe out a region, it’s far more likely that a smaller, more local disaster will hit a single facility.

In addition to human and animal error, datacenters can also overheat, catch fire, and flood. By spreading out the workload within a local region, across multiple facilities, applications can achieve additional availability.

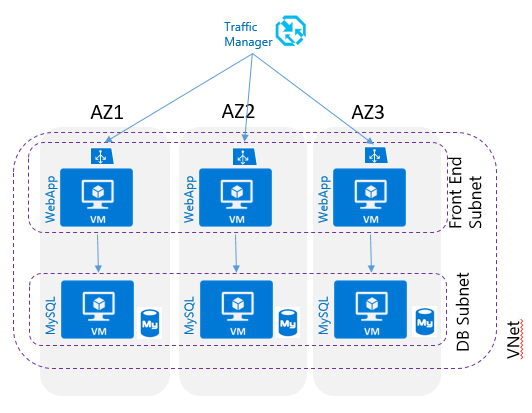

An important note – Availability Zones are different from Availability Sets. Availability Sets keep VMs on different physical hardware so that rack-level failures and updates don’t bring down the whole application – you wouldn’t want to run all 10 Web servers and 2 SQL servers on the same host. Availability Zones extend this in a way, allowing you to ensure that, in addition to mitigating host-level failure, your application is also protected against building-level failure. Availability Sets support a 99.95% SLA; Availability Zones will support a 99.99% SLA when generally available! That’s a significant improvement that many of my customers will be quite pleased with.
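
To put those two SLA figures in perspective, here is a small Python sketch that converts an availability percentage into a downtime budget. The 30-day month and the function name are my own assumptions for illustration; the actual SLA terms define how downtime is measured.

```python
MINUTES_PER_30_DAY_MONTH = 30 * 24 * 60  # 43,200 minutes


def allowed_downtime_minutes(sla_percent: float,
                             period_minutes: int = MINUTES_PER_30_DAY_MONTH) -> float:
    """Downtime budget implied by an availability percentage."""
    return period_minutes * (1 - sla_percent / 100)


for name, sla in [("Availability Sets", 99.95), ("Availability Zones", 99.99)]:
    minutes = allowed_downtime_minutes(sla)
    print(f"{name} ({sla}%): {minutes:.2f} minutes per 30-day month")
```

Under these assumptions the budget drops from roughly 21.6 minutes a month to about 4.3 minutes.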

From a technical perspective, the services initially supported in Availability Zones are IaaS functions: VMs, Managed Disks, NICs, and supporting infrastructure. I expect this list to expand as Microsoft adopts the architecture pattern internally and adds new features and capabilities. Aside: if you need a feature, make sure you request it here. Additionally, Managed Disks are required. If you haven’t already adopted Managed Disks, this is another great reason to migrate.

A sample architecture

It is not difficult to modify your templates to perform cross-zone deployments. The Zone is specified in the relevant resource, for example this disk:

    {
      "type": "Microsoft.Compute/disks",
      "name": "myManagedDataDisk",
      "apiVersion": "[variables('computeAPI')]",
      "location": "[resourceGroup().location]",
      "zones": ["1"],
      "properties": {
        ...
      }
    },

The same principle applies for a VM:

    {
      "apiVersion": "[variables('computeResouresApiVersion')]",
      "type": "Microsoft.Compute/virtualMachines",
      "zones": ["1"],
      "name": "[variables('vmName')]",
      "location": "[resourceGroup().location]",
      "properties": {...}
    }
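
If you generate templates from a script, spreading VMs across zones is mostly a matter of cycling through the zone IDs. The Python sketch below shows one way this might look. The helper name and VM names are hypothetical, the zone IDs "1" to "3" are an assumption about the target region, and the stubs deliberately leave out `apiVersion` and `properties`, so they are not deployable as written.

```python
import json


def zonal_vm_resources(vm_names, zones=("1", "2", "3")):
    """Build one VM resource stub per name, assigning zones round-robin."""
    resources = []
    for index, name in enumerate(vm_names):
        resources.append({
            "type": "Microsoft.Compute/virtualMachines",
            "name": name,
            "location": "[resourceGroup().location]",
            # Each VM is pinned to exactly one zone.
            "zones": [zones[index % len(zones)]],
        })
    return resources


# Hypothetical VM names, for illustration only.
print(json.dumps(zonal_vm_resources(["web-0", "web-1", "web-2", "web-3"]), indent=2))
```

With four VMs and three zones, the fourth VM wraps back around to the first zone, so losing any one zone still leaves at least two VMs running.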

This feature is currently in preview, so don’t start deploying applications across Availability Zones quite yet. Architectural patterns and best practices are still under development, and you’ll need to do some careful planning as to how to achieve your goals. Whether the application is best deployed Active/Active or Active/Passive, whether it can handle the (small) degree of latency introduced, and how best to handle scale are all questions to think about. Additionally, you can only deploy into West Europe and East US 2, with a short list of SKUs. This will grow as the preview progresses.

There are two terms that will be important to differentiate:

- Regional Resource – this is a resource, such as a virtual network, that exists across a region – i.e. across multiple zones

- Zonal Resource – this is a resource, such as a virtual machine, that exists in a zone.
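
One quick way to see this distinction in your own templates is to group resources by their `zones` property. The Python sketch below does that for a list of resource dictionaries. Treating every resource without a `zones` property as regional is a simplification of this sketch, and the resource names other than `myManagedDataDisk` are hypothetical.

```python
def split_by_zone(resources):
    """Group resource names by zone; resources that declare no `zones`
    property are collected under the key "regional"."""
    groups = {}
    for resource in resources:
        zones = resource.get("zones") or ["regional"]
        for zone in zones:
            groups.setdefault(zone, []).append(resource["name"])
    return groups


# Hypothetical resources, trimmed to the fields this sketch reads.
template_resources = [
    {"type": "Microsoft.Network/virtualNetworks", "name": "myVnet"},
    {"type": "Microsoft.Compute/virtualMachines", "name": "myVm1", "zones": ["1"]},
    {"type": "Microsoft.Compute/disks", "name": "myManagedDataDisk", "zones": ["1"]},
    {"type": "Microsoft.Compute/virtualMachines", "name": "myVm2", "zones": ["2"]},
]
print(split_by_zone(template_resources))
```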

The Azure Availability Zones probably won’t have any significant impact on the cost of running an application in Azure IaaS, but one key aspect might – any data that crosses an AZ boundary is billable. This is in line with the practices of both Google and AWS.
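
If you want a rough feel for what that might mean for your bill, the estimate is simple multiplication. The Python sketch below uses a placeholder rate and an assumed traffic volume. Neither is a published Azure figure, so substitute the numbers from the pricing page and your own monitoring.

```python
def cross_zone_transfer_cost(gb_transferred: float, price_per_gb: float) -> float:
    """Estimated charge for data that crosses a zone boundary."""
    if gb_transferred < 0 or price_per_gb < 0:
        raise ValueError("inputs must be non-negative")
    return gb_transferred * price_per_gb


# PLACEHOLDER rate, not a published Azure price.
HYPOTHETICAL_PRICE_PER_GB = 0.01
# Assumed volume of cross-zone traffic per month, e.g. database replication.
monthly_cross_zone_gb = 500

estimate = cross_zone_transfer_cost(monthly_cross_zone_gb, HYPOTHETICAL_PRICE_PER_GB)
print(f"Estimated monthly cross-zone charge: {estimate:.2f}")
```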

For more information, you can visit the official documentation here, sign up for the preview here, visit StackOverflow, or reach out and I’ll do my best to answer any questions you have! If you’re visiting Microsoft Ignite, you can attend “Availability Zones: Design highly-available applications on Microsoft Azure” on Tue, 26th Sep (2:15 PM to 3:30 PM) and learn a whole lot about how to work on Availability Zones in one place!
